Is AI Only Problematic if We Can See It?

Thoughts on invisible threats and tangible feelings.

Dear Reader,

Last week, I uploaded a video to our Instagram account. Usually, our videos feature only voice-over and text (or a person we’re visiting as the protagonist), but this time I experimented with an AI-generated anchor person. The avatar is white and slim, with a defined waist, blond hair, sharp bangs, and a long mullet, along with a soft facial structure and light beard stubble. Its voice is labeled as female in the AI program. Working against the binary constraints of the dataset, I created a slightly uncanny, nonbinary version of myself.

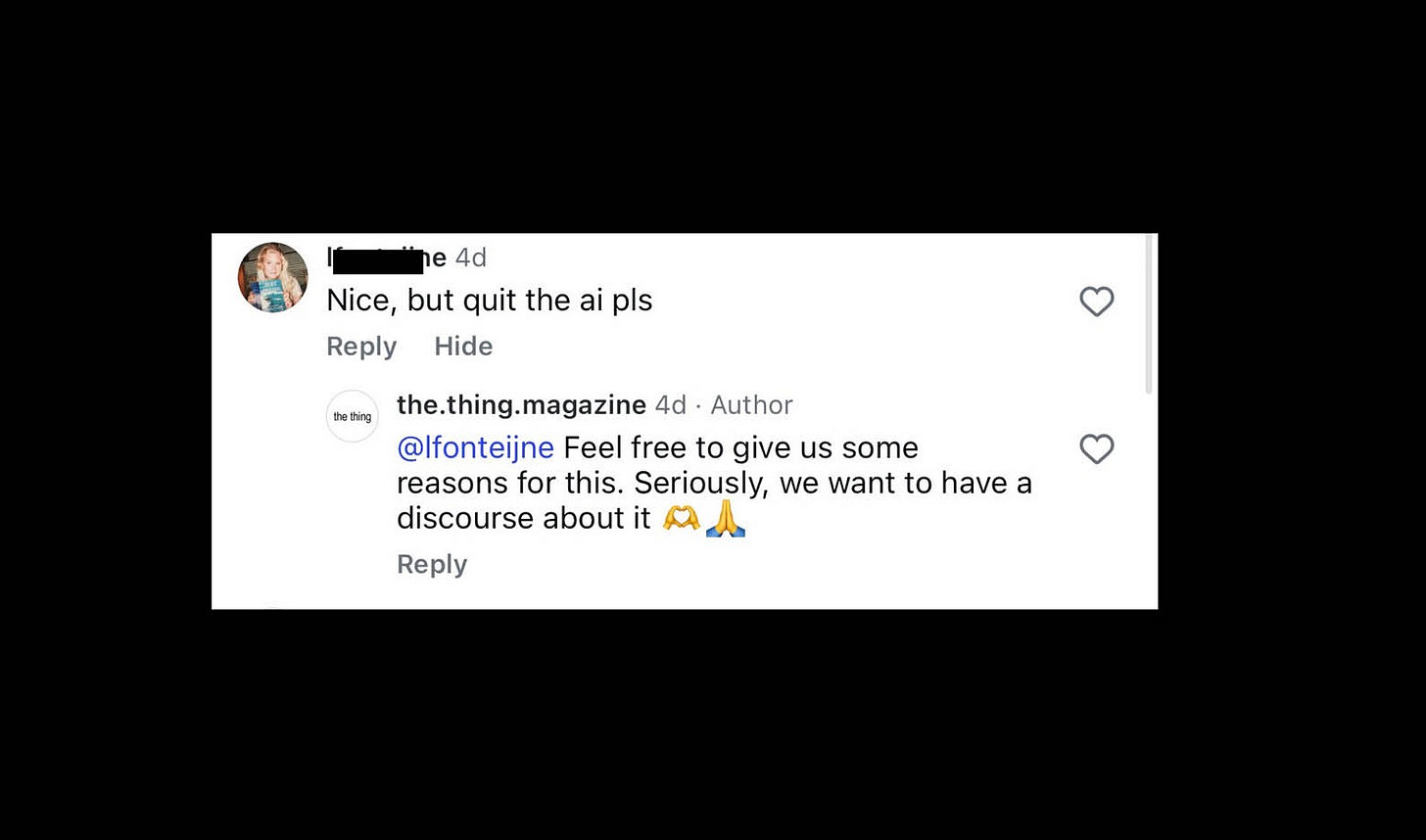

Judging by some of the comments on the first video, the decision to “hire an AI” is highly controversial. And it should be.

I did this experiment for multiple reasons: I don’t want to be an anchor person on the internet myself, yet the algorithm is pushing these kinds of videos right now. I don’t want to be visible as a person on the internet, a space where anonymous people can say practically anything they want without facing legal repercussions (especially since Mark Zuckerberg decided that free speech equals free hate speech). Furthermore, I’m self-employed, and everything I do for work already kind of dominates my private life. I don’t want my body involved in that dynamic on top of everything else.

I am far from being anonymous. My personal profile is linked in the Instagram bio of the thing Magazine, and in Germany every website (people in the U.S. might want to sit down now!) has to provide the name and legal address of the person responsible in the imprint. So I very much take responsibility for the things we publish – also on a personal level.

Back to the anchor person.

AI is frightening. I totally get that. The companies behind it often use data they don’t own to create new outputs that grant no credit to the original creators. It consumes enormous amounts of resources, might replace jobs, and at the same time reproduces biases and all kinds of discriminatory patterns.

Does that mean I cannot use AI to create a fictional anchor person?

AI is not AI.

“Even defining the term is tricky. As media theorist Peter Weibel puts it, ‘artificial intelligence […] does not exist.’ Instead, he describes AI as a field made up of an ‘ensemble of machines, media, programs, algorithms, hardware, and software.’”

– Merzmensch. KI-Kunst (Digitale Bildkulturen). Berlin: Verlag Klaus Wagenbach, 2023, p. 9.

AI already surrounds us, in ways that are far more threatening than my anchor person. Just recently, the police in Germany started using face-recognition AI to analyze video footage from train stations. They say that it can help prevent crime and save lives, for example by finding missing people. But the potential negative consequences are obvious: We are all trackable, and AI can make mistakes too. After all, it learns those mistakes from its creators.

In a podcast by the German newspaper Die Zeit, the AI expert and journalist Kai Biermann described this tension using the term Rechtsgüterabwägung. It’s a heavy German word for the legal weighing of potential benefits (like those of a new technology) against potential losses for citizens. Put differently: Do we weigh the potential of saving lives with the help of AI surveillance more heavily than the right to remain anonymous in public spaces?

In the case of my anchor person, one could argue that I could simply not use it. Fair enough. But what exactly is the loss if I do? Resources, for sure. And yes, I do believe in the climate crisis and I want it to stop. I’ve never owned a car, I go everywhere by bicycle or train, I eat mostly vegetarian, I turn off the lights when I leave a room, and so on. But we also know that individualizing structural problems won’t solve them.

So what’s the gain? For me, it’s two things.

First: experimenting with the unknown.

Second: expanding the possibilities of what I can do and become.

We are a tiny media business. Without AI, we couldn’t do much of what we’re doing, even without the anchor person. As we’re two non-native speakers publishing texts in English, every text we publish gets a final AI edit to check grammar and style. Is that somehow better than using an AI anchor person? Probably not. But nobody ever noticed it.

But what’s the difference? My guess would be: One is visible, the other one is not.

And here comes my hot take: Humans are still pretty simple creatures (myself included). We are fearful, emotionally led beings, lost in space. Most things we don’t know, we don’t want. And seeing something makes it feel more real to us. Biologically and socially, that’s how we learned to navigate the world. I think this has to change drastically. Because AI can now create fake images and videos so easily, we need to become even more critical of the things we don’t see:

The AI analyzing cameras in train stations.

The AI scanning your face when you unlock your phone.

The AI linking human data to potential crimes.

The AI selecting targets for military drones.

That’s the AI that actually threatens us.

Don’t get me wrong: this isn’t AI whataboutism meant to distract from my anchor person. I understand why it feels uncanny or morally debatable. But at least this anchor person gives me the chance to experiment with formats for communicating complex topics in a language that many people speak – and that isn’t my mother tongue. It allows me to create a nonbinary performing person who might resemble the person I sometimes wish I were in this world but am not brave enough to become. It creates an avatar that doesn’t take hate comments personally and stays calm even when the comment section gets heated.

As journalists, we make it a point to be transparent about what may already be obvious to many: In the captions, we clearly explain which parts of the video are AI-generated and which are not. However, the impulsive, emotional nature of online reactions often leads people to comment before reading any additional information.

We also need to be precise in how we talk about AI, as Emily M. Bender and Nanna Inie argue. They refer to “anthropomorphizing language”, language that uses metaphors originally intended for human interactions. As they put it, “‘AI’ is not your friend. Nor is it an intelligent tutor, an empathetic ear, or a helpful assistant. It cannot ‘make up’ facts, and it does not make ‘mistakes’. It does not actually answer your questions.”

In that sense, using AI to create something like an anchor person may itself be a form of anthropomorphizing visualization, and, in turn, a kind of glitch within this emerging and potentially dangerous emotional framing of AI as a personal friend or assistant.

AI is a controversial topic. I understand that. It’s frightening, and in many ways it’s already a technology that individuals can no longer control. Personally, I don’t deal with this uncertainty by avoiding it at all costs.

Instead, I try to experiment, debate with people, stay informed, curious, and critical.

Yours dearly,

(the real) Anton

P.S. We’d really love to hear your constructive feedback on this!

In a matter of time, I think we will not find it as offensive. But as of now, it feels as though it is about 'trust'. There is a tendency (thanks to AI slop and all the misinformation) to assume all of it is sheer nonsense. I'm not for it tbh, I just feel that as a society we need to prioritise other spaces for more 'real' forms of engagement and conversation. But I totally get your point in wanting to use it to say things and keep your face out of the picture. I want to generate an avatar to share dad jokes but I have refrained, not because I think my jokes aren't funny :)) I just don't want to invest so much in these technologies right now (why? It's a separate post!). I also wanted to generate audio via 11labs for my writing, knowing that people don't read as much and that as audio I could get more people to listen to the message. What was more important: getting the message across, or caring too much about the 'how'? Then I heard McLuhan's voice in my head: 'the medium is the message'! Haha! It's true. I can't speak for everyone, but for me this technology (not all of it, but the video, audio and LLMs) has been built in such untrustworthy ways that it leaves a bad taste... until we have been completely robbed of our own judgment and no longer care, which I think is the more likely scenario.

America is sort of anchored by AI doomerism. Meanwhile, China is going full speed ahead on AI art and books.

https://nathankyoung.substack.com/p/notes-on-the-cultural-revolution?r=2kp7ol